CloudBase

How Eric cut CloudBase’s API latency from 1.2s to 180ms

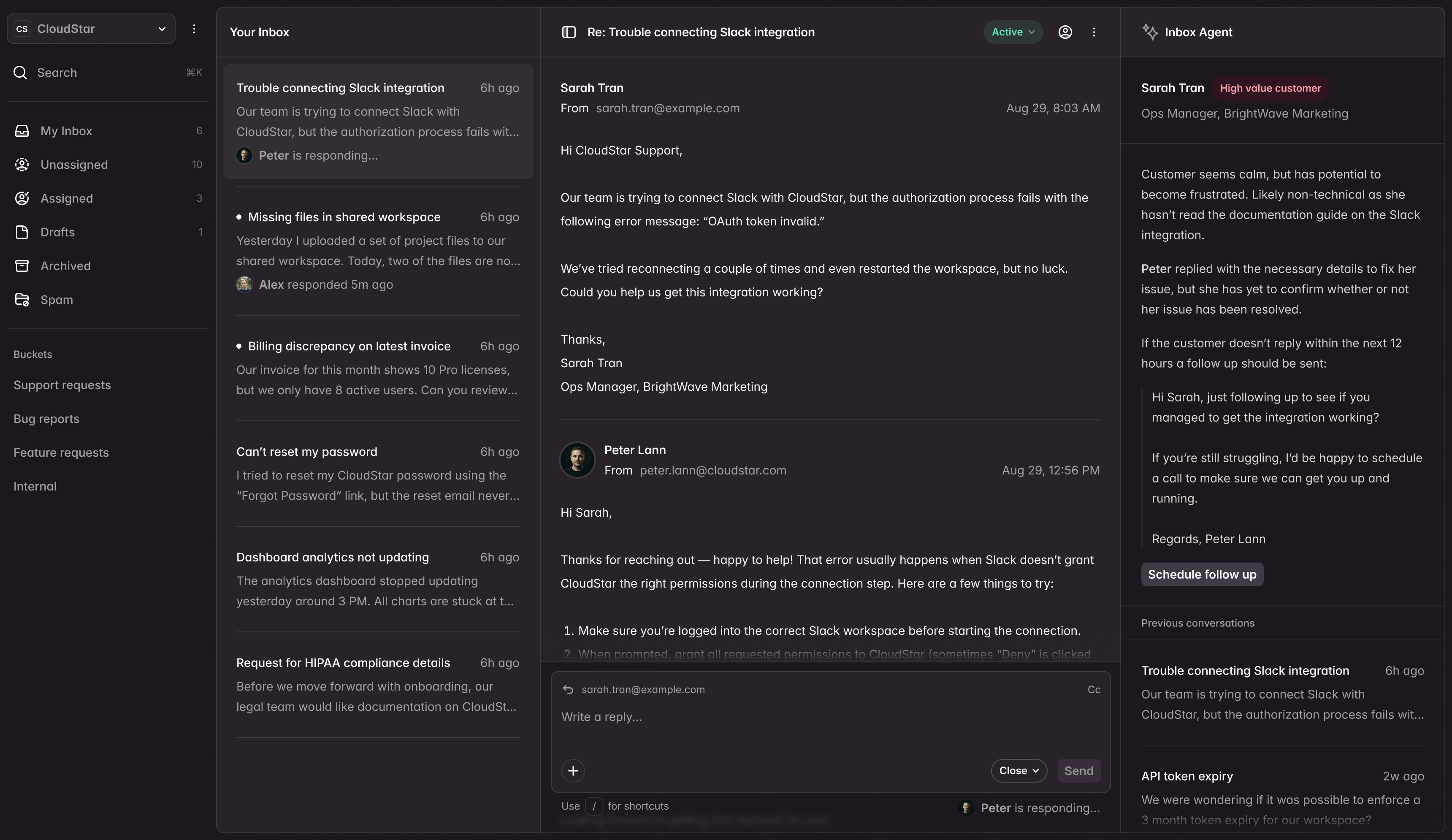

CloudBase’s customers were complaining about a sluggish dashboard. The API powering it had gradually slowed to a crawl over six months, and the team couldn’t pinpoint why.

- 85% reduction in API response time
- 6 queries per request (was 47)
- Under 1 month to results

The challenge

CloudBase's dashboard API endpoint aggregated data from multiple microservices and a large PostgreSQL database. Response times had crept from 200ms to 1.2 seconds over six months as data volumes grew. Customers were churning, citing "the dashboard is unusable."

The engineering team suspected the database but couldn't isolate the problem. They'd already added indexes to the obvious columns and upgraded their database instance; neither made a meaningful difference.

The approach

Eric deployed distributed tracing across the API gateway and all downstream services. The traces revealed something the team hadn't considered: 70% of the latency came from N+1 query patterns deep in the ORM layer. Each dashboard widget was triggering 15–20 individual database queries instead of batch-loading the data it needed.
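A minimal sketch of the N+1 shape the traces exposed. It uses Python's built-in `sqlite3` module as a stand-in for CloudBase's PostgreSQL database and ORM (an assumption; the case study does not name the stack), and the `widgets`/`metrics` schema, `make_db` and `QueryCounter` helpers are hypothetical. Counting statements with `Connection.set_trace_callback` is only a crude, single-process analogue of distributed tracing, but it makes the pattern visible: one query for the parent rows, then one more query for every row.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Hypothetical schema: 20 dashboard widgets, each with 3 metric rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE widgets (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE metrics (
            id INTEGER PRIMARY KEY,
            widget_id INTEGER NOT NULL REFERENCES widgets(id),
            value REAL NOT NULL
        );
        """
    )
    conn.executemany(
        "INSERT INTO widgets (id, name) VALUES (?, ?)",
        [(i, f"widget-{i}") for i in range(1, 21)],
    )
    conn.executemany(
        "INSERT INTO metrics (widget_id, value) VALUES (?, ?)",
        [(w, float(w * 10 + k)) for w in range(1, 21) for k in range(3)],
    )
    conn.commit()  # commit before tracing starts so setup isn't counted
    return conn


class QueryCounter:
    """Records every SQL statement SQLite runs on a connection."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.statements: list[str] = []
        conn.set_trace_callback(self.statements.append)

    @property
    def count(self) -> int:
        return len(self.statements)


def load_n_plus_one(conn: sqlite3.Connection) -> dict[str, list[float]]:
    """N+1 access pattern: 1 query for the widgets, then 1 more query per widget."""
    result: dict[str, list[float]] = {}
    widgets = conn.execute("SELECT id, name FROM widgets ORDER BY id").fetchall()
    for widget_id, name in widgets:
        rows = conn.execute(
            "SELECT value FROM metrics WHERE widget_id = ? ORDER BY id", (widget_id,)
        ).fetchall()
        result[name] = [value for (value,) in rows]
    return result


if __name__ == "__main__":
    conn = make_db()
    counter = QueryCounter(conn)
    load_n_plus_one(conn)
    print(counter.count)  # 21 = 1 widgets query + 20 per-widget queries
```

The query count grows linearly with the number of parent rows, which is why this kind of latency creeps up as data volumes grow rather than appearing all at once.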

He refactored the data access layer to use eager loading, eliminating the N+1 patterns entirely. For the most expensive aggregation, a real-time usage summary that touched millions of rows, he introduced a materialised view that refreshed every five minutes.
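A sketch of the batch-loading idea, again on a hypothetical `sqlite3` schema rather than CloudBase's actual code: fetch the parent rows, then fetch all of their children in a single `IN (...)` query and group them in memory. This is the same shape as the "select IN" eager-loading strategy many ORMs provide (for example SQLAlchemy's `selectinload`); a `JOIN`-based eager load would reduce it to one query at the cost of repeating parent columns. The materialised-view half of the change is PostgreSQL-specific (`CREATE MATERIALIZED VIEW` / `REFRESH MATERIALIZED VIEW`) and is not modelled here.

```python
import sqlite3
from collections import defaultdict


def make_db() -> sqlite3.Connection:
    """Hypothetical schema: 20 dashboard widgets, each with 3 metric rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE widgets (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE metrics (
            id INTEGER PRIMARY KEY,
            widget_id INTEGER NOT NULL REFERENCES widgets(id),
            value REAL NOT NULL
        );
        """
    )
    conn.executemany(
        "INSERT INTO widgets (id, name) VALUES (?, ?)",
        [(i, f"widget-{i}") for i in range(1, 21)],
    )
    conn.executemany(
        "INSERT INTO metrics (widget_id, value) VALUES (?, ?)",
        [(w, float(w * 10 + k)) for w in range(1, 21) for k in range(3)],
    )
    conn.commit()
    return conn


class QueryCounter:
    """Records every SQL statement SQLite runs on a connection."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.statements: list[str] = []
        conn.set_trace_callback(self.statements.append)

    @property
    def count(self) -> int:
        return len(self.statements)


def load_batched(conn: sqlite3.Connection) -> dict[str, list[float]]:
    """Batch loading: 1 query for the widgets, 1 query for all of their metrics."""
    widgets = conn.execute("SELECT id, name FROM widgets ORDER BY id").fetchall()
    if not widgets:
        return {}
    ids = [widget_id for widget_id, _ in widgets]
    # One bound "?" per id: values are passed as parameters, never formatted into SQL.
    placeholders = ", ".join("?" for _ in ids)
    rows = conn.execute(
        f"SELECT widget_id, value FROM metrics WHERE widget_id IN ({placeholders}) "
        "ORDER BY widget_id, id",
        ids,
    ).fetchall()
    by_widget: defaultdict[int, list[float]] = defaultdict(list)
    for widget_id, value in rows:
        by_widget[widget_id].append(value)
    return {name: by_widget[widget_id] for widget_id, name in widgets}


if __name__ == "__main__":
    conn = make_db()
    counter = QueryCounter(conn)
    load_batched(conn)
    print(counter.count)  # 2, regardless of how many widgets there are
```

The query count now stays constant as the number of widgets grows. Production ORMs typically split very large id lists into chunks to stay within the database's bound-parameter limits.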

The results

API latency dropped from 1.2 seconds to 180 milliseconds, an 85% reduction. Database queries per request fell from 47 to 6. The dashboard felt instant, and customer satisfaction scores improved within the first month.
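The headline percentage is a reduction in response time, computed from the before and after latencies. The same figures also give the speed-up factor and the drop in queries per request:

$$
\begin{aligned}
\text{latency reduction} &= \frac{1200\ \text{ms} - 180\ \text{ms}}{1200\ \text{ms}} = \frac{1020}{1200} = 0.85 = 85\% \\
\text{speed-up} &= \frac{1200\ \text{ms}}{180\ \text{ms}} \approx 6.7\times \\
\text{query reduction} &= \frac{47 - 6}{47} = \frac{41}{47} \approx 87\%
\end{aligned}
$$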

CloudBase's CTO later noted that the tracing infrastructure Eric put in place caught two more regressions before they reached production, saving the team weeks of debugging.

Ready to see similar results?

Book a free 20-minute call to discuss your performance challenges. No obligation.